Transparency and Accountability Interface (TAI): Explainability, Trust, and Auditability in Ethical-Conscientious Intelligence Systems

Author: Göktürk Kadıoğlu

Keywords: Ethical Artificial Intelligence, Transparency, Accountability, Explainability (XAI), ETVZ, Audit Architecture

ABSTRACT

One of the primary causes of ethical failures in artificial intelligence systems is the black-box nature of their decision-making processes. When users, regulators, or society cannot understand “why the system made this decision,” trust erodes.

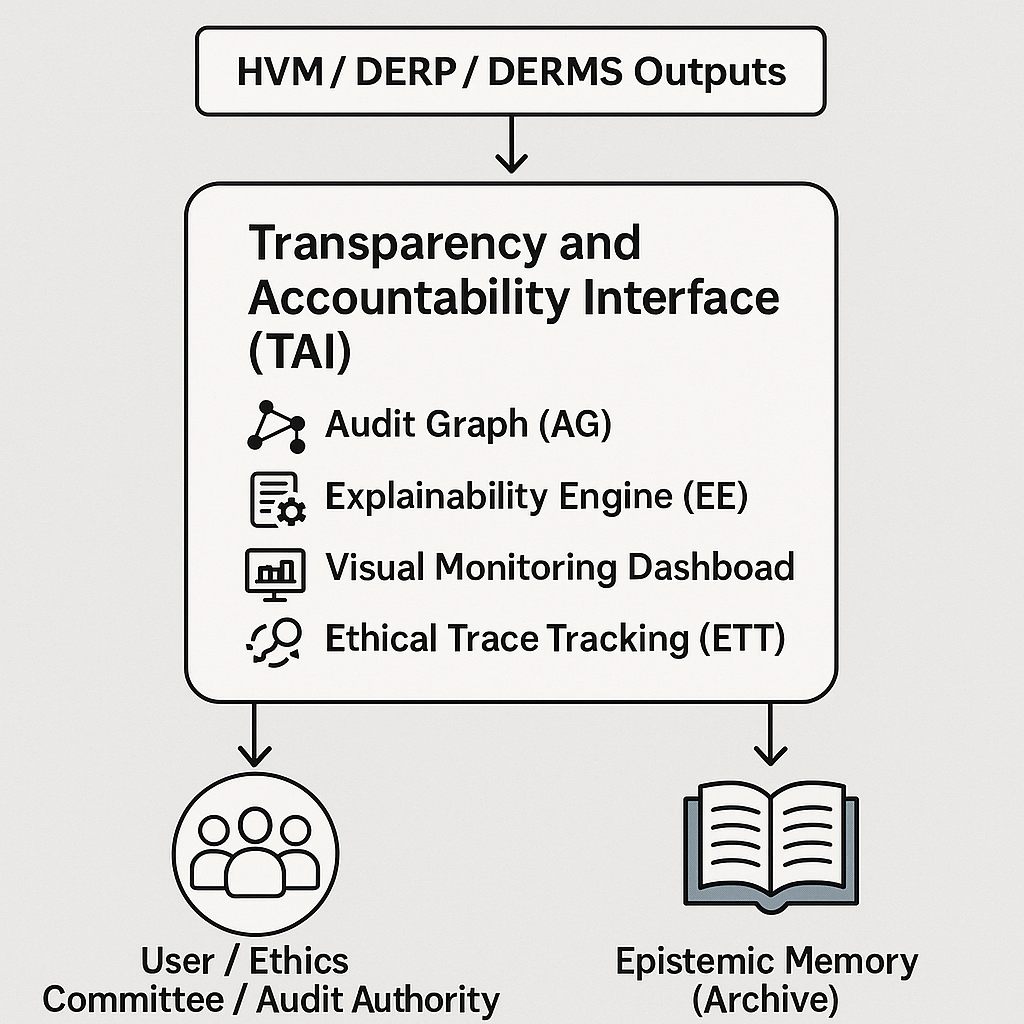

This paper introduces the Transparency and Accountability Interface (TAI) module developed for the Ethics-Based Conscientious Intelligence (ETVZ) architecture. TAI presents the artificial intelligence’s ethical decision chain, together with the data used, conscientious weights, regulatory references, and human-approval records, in an explainable, traceable, and auditable manner.

The structure, comprising the Neo4j-based Audit Graph (AG), Explainability Engine (EE), Visual Monitoring Dashboard (VMD), and Ethical Trace Tracking (ETT) components, aims to establish ETVZ as a world standard in ethical transparency.

1. INTRODUCTION: THE NEED FOR TRANSPARENCY IN ETHICAL SYSTEMS

In ethical artificial intelligence systems, “the explainability of the decision” is as important as “the correctness of the decision.” The conscientious reliability of a system depends not only on making correct decisions but also on the comprehensibility of why those decisions are correct.

The ETVZ architecture provides a robust infrastructure with HVM (conscience), DERP (law), DERMS (monitoring), and Epistemic Memory (knowledge) modules; however, how these components are communicated to users constitutes a separate problem domain. TAI has been developed in response to this need:

• Visualizes decision processes,

• Summarizes the contribution of each module,

• Generates audit and explanation reports.

2. THEORETICAL FOUNDATIONS AND LITERATURE FRAMEWORK

2.1 The Concept of Transparency

Transparency refers to the comprehensibility of the system’s internal decision logic by external observers. In the context of ethical artificial intelligence, this encompasses the components of explainability and traceability.

2.2 Literature Connection

Contemporary XAI (Explainable AI) approaches such as LIME, SHAP, and Grad-CAM attempt to explain decision networks mathematically. However, in ethical intelligence, explanation must be not only data-driven but also grounded in conscientious, cultural, and legal references. TAI addresses this gap by treating explanation not merely as an algorithmic output but as a conscientious cause-and-effect chain.

3. ARCHITECTURE AND SYSTEM DESIGN

3.1 General Architecture

[HVM / DERP / DERMS Outputs]

↓

[Transparency and Accountability Interface (TAI)]

├─ Audit Graph (AG)

├─ Explainability Engine (EE)

├─ Visual Monitoring Dashboard (VMD)

└─ Ethical Trace Tracking (ETT)

↓

→ User / Ethics Committee / Audit Authority

→ Epistemic Memory (Archive)

3.2 Component Descriptions

A. Audit Graph (AG):

Records the trace of each ethical decision (decision – rationale – data – human approval – outcome) as a chain in a Neo4j graph database.

Example:

(Decision)-[:RATIONALE]->(Conscientious_Score)

(Conscientious_Score)-[:BASIS]->(Policy_JSON)
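The two relationships above can be written to a graph as Cypher `MERGE` statements. The following is a minimal sketch, not the ETVZ implementation: the `AuditEdge` class, the `edge_to_cypher` helper, and the `id` node property are hypothetical names introduced for illustration, and no database connection is opened. Node labels and relationship types are interpolated from a fixed, trusted list, while node identifiers are left as query parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditEdge:
    """One directed relationship in the audit chain (hypothetical helper type)."""
    source_label: str
    rel_type: str
    target_label: str


# The two relationships from the example above.
AUDIT_CHAIN = [
    AuditEdge("Decision", "RATIONALE", "Conscientious_Score"),
    AuditEdge("Conscientious_Score", "BASIS", "Policy_JSON"),
]


def edge_to_cypher(edge: AuditEdge, index: int) -> str:
    """Render one edge as a parameterized Cypher MERGE statement.

    Assumption: nodes are identified by an `id` property. The id values are
    left as parameters ($src_N, $dst_N) instead of being pasted into the text.
    """
    return (
        f"MERGE (a:{edge.source_label} {{id: $src_{index}}}) "
        f"MERGE (b:{edge.target_label} {{id: $dst_{index}}}) "
        f"MERGE (a)-[:{edge.rel_type}]->(b)"
    )


statements = [edge_to_cypher(e, i) for i, e in enumerate(AUDIT_CHAIN)]
for statement in statements:
    print(statement)
```

Using `MERGE` rather than `CREATE` keeps the write idempotent, so replaying the same decision trace would not duplicate nodes or relationships.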

B. Explainability Engine (EE):

Generates an explanation report for each decision, either as human-readable text or as a visual cause-effect flow diagram.

Sample output:

“This recommendation has been accepted by HVM with an ethical score of 0.82. DERP policy ‘Justice-TR-v2’ was referenced, and compliance with KVKK Article 9 was confirmed through the UE module.”
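One simple way to produce a text report like the sample is to fill a fixed template from a decision record. The sketch below assumes such a template-based approach; the `DecisionRecord` fields and the `render_explanation` function are hypothetical and only mirror the values that appear in the sample output.

```python
from dataclasses import dataclass


@dataclass
class DecisionRecord:
    """Values the explanation needs (field names are illustrative assumptions)."""
    ethical_score: float     # score assigned by HVM
    policy_id: str           # DERP policy that was referenced
    legal_reference: str     # legal provision checked for compliance
    verifying_module: str    # module that confirmed compliance


def render_explanation(rec: DecisionRecord) -> str:
    """Fill a fixed sentence template with the fields of one decision."""
    return (
        f"This recommendation has been accepted by HVM with an ethical score of "
        f"{rec.ethical_score:.2f}. DERP policy '{rec.policy_id}' was referenced, "
        f"and compliance with {rec.legal_reference} was confirmed through the "
        f"{rec.verifying_module} module."
    )


record = DecisionRecord(
    ethical_score=0.82,
    policy_id="Justice-TR-v2",
    legal_reference="KVKK Article 9",
    verifying_module="UE",
)
print(render_explanation(record))
```

A template keeps every statement in the report traceable to a recorded field, which suits an audit setting better than free-form text generation.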

C. Visual Monitoring Dashboard (VMD):

Displays the entire decision chain to users with color-coded flows (green: compliant, yellow: controversial, red: violation risk). Ethics Committee members can intervene directly through this dashboard.

D. Ethical Trace Tracking (ETT):

Maintains version history and human intervention records for each decision. These records are sent to Epistemic Memory to create the “conscientious audit archive.”
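The version history described here can be pictured as an append-only list of entries per decision, where each entry notes whether a human intervened. The following sketch rests on that assumption; `TraceEntry`, `DecisionTrace`, and their fields are hypothetical names, not part of the published ETVZ design.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class TraceEntry:
    """One immutable version of a decision (illustrative structure)."""
    version: int
    outcome: str
    reviewer: Optional[str]  # None when no human intervened
    recorded_at: datetime


@dataclass
class DecisionTrace:
    """Append-only version history for a single decision."""
    decision_id: str
    entries: List[TraceEntry] = field(default_factory=list)

    def record(self, outcome: str, reviewer: Optional[str] = None) -> TraceEntry:
        """Append a new version; earlier versions are never modified."""
        entry = TraceEntry(
            version=len(self.entries) + 1,
            outcome=outcome,
            reviewer=reviewer,
            recorded_at=datetime.now(timezone.utc),
        )
        self.entries.append(entry)
        return entry

    def human_interventions(self) -> List[TraceEntry]:
        """Return only the versions that a human reviewer produced."""
        return [e for e in self.entries if e.reviewer is not None]


trace = DecisionTrace("decision-001")
trace.record("accepted")                                   # automated decision
trace.record("overridden", reviewer="committee-member-7")  # human intervention
print([e.version for e in trace.entries])
print(len(trace.human_interventions()))
```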

4. EXPLAINABILITY ALGORITHM (XAI-ETVZ MODEL)

4.1 Explanation Score (EXS)

EXS = η₁×C_comprehensibility + η₂×C_rationale_consistency + η₃×C_human_traceability

C_comprehensibility: Rate of user comprehension of the explanation

C_rationale_consistency: Overlap rate of conscientious and legal foundations

C_human_traceability: Human intervention recording rate

Threshold Values:

• EXS ≥ 0.80 → High Transparency

• 0.60 ≤ EXS < 0.80 → Medium

• EXS < 0.60 → Low (Audit warning)
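The score and its thresholds can be sketched in a few lines. The source does not give values for the weights η₁, η₂, η₃, so the defaults below (0.4, 0.3, 0.3) are placeholders chosen only for illustration; the sketch also assumes that each component lies in [0, 1] and that the weights sum to 1, which keeps EXS on the same scale as the thresholds.

```python
def explanation_score(comprehensibility, rationale_consistency,
                      human_traceability, weights=(0.4, 0.3, 0.3)):
    """Weighted sum EXS = eta1*C1 + eta2*C2 + eta3*C3.

    The default weights are illustrative assumptions, not values from the paper.
    """
    components = (comprehensibility, rationale_consistency, human_traceability)
    if any(not 0.0 <= c <= 1.0 for c in components):
        raise ValueError("each component must lie in [0, 1]")
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(w * c for w, c in zip(weights, components))


def transparency_level(exs):
    """Map an EXS value onto the three bands listed above."""
    if exs >= 0.80:
        return "High Transparency"
    if exs >= 0.60:
        return "Medium"
    return "Low (Audit warning)"


for scores in [(0.9, 0.9, 0.8), (0.7, 0.7, 0.7), (0.5, 0.5, 0.5)]:
    exs = explanation_score(*scores)
    print(f"{exs:.2f} -> {transparency_level(exs)}")
```

With the placeholder weights, the first case gives 0.4×0.9 + 0.3×0.9 + 0.3×0.8 = 0.87, which falls in the High Transparency band.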

4.2 Automated Reporting Flow

1. EE is triggered after an HVM decision.

2. EE collects data from AG and ETT.

3. The report is generated and sent to VMD.

4. A summary of the report is written to the DERP Ledger (hash + timestamp).
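The four steps above can be sketched as two small functions. The source specifies only "hash + timestamp" for the ledger entry, so the use of SHA-256 over a canonical JSON serialization is an assumption, as are the function names `build_report` and `ledger_entry` and the shape of the input dictionaries.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_report(decision: dict, audit_chain: list, trace_entries: list) -> dict:
    """Steps 1-3: combine the HVM decision with data from AG and ETT."""
    return {
        "decision": decision,
        "audit_chain": audit_chain,
        "trace": trace_entries,
    }


def ledger_entry(report: dict) -> dict:
    """Step 4: reduce the report to a hash plus a UTC timestamp.

    Assumption: SHA-256 over JSON with sorted keys, so that the same report
    content always yields the same digest regardless of key order.
    """
    canonical = json.dumps(report, sort_keys=True, separators=(",", ":"))
    return {
        "sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


report = build_report(
    decision={"id": "decision-001", "ethical_score": 0.82},
    audit_chain=["Decision", "Conscientious_Score", "Policy_JSON"],
    trace_entries=[{"version": 1, "outcome": "accepted", "reviewer": None}],
)
entry = ledger_entry(report)
print(entry["sha256"], entry["timestamp"])
```

Storing only the digest lets an auditor later verify that an archived report has not been altered, without placing the full report in the ledger.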

5. ETHICAL AND SOCIO-TECHNOLOGICAL EVALUATION

5.1 Ethical Dimension

TAI is the “conscientious conscience” of ETVZ: it continuously obliges the system to explain itself. Ethical transparency is the foundation of individual and societal trust. When every user can see the logic behind decisions, ethical legitimacy increases.

5.2 Societal and Institutional Dimension

• TAI provides audit APIs for government institutions and the private sector.

• Academic institutions can utilize TAI reports as data.

• A citizen-level “ethical decision monitoring dashboard” can be offered (e.g., ETVZ.tr/Transparency).

6. PILOT TEST RESULTS

In tests conducted through the Vatansoft–ETVZ collaboration in the final quarter of 2025:

Result: TAI integration significantly enhanced the system’s explainability and auditability.

7. CONCLUSION AND FUTURE WORK

The Transparency and Accountability Interface is the conscientious mirror of the ETVZ architecture. This module standardizes “the narratability of decisions” as much as “the correctness of decisions.”

Future research areas include:

• Development of an LLM-based “Conscience Narrator” for natural language explanation generation,

• Establishment of a global transparency network by storing audit graphs on a blockchain,

• Making ethical decision reports publicly accessible through open APIs.

TAI is the final link completing ETVZ’s ultimate vision of “conscientious, responsible, explainable intelligence.” Through this, ETVZ becomes not only a system that behaves ethically but one that can prove its ethical behavior.